DeepSeek V4 Launch: 1M Context, Flash & Pro, OpenAI-Compatible API

DeepSeek V4

Quietly and without fuss, DeepSeek has released its long-awaited flagship update in 4 versions.

To whet your appetite: it beats the new Kimi K2.6.

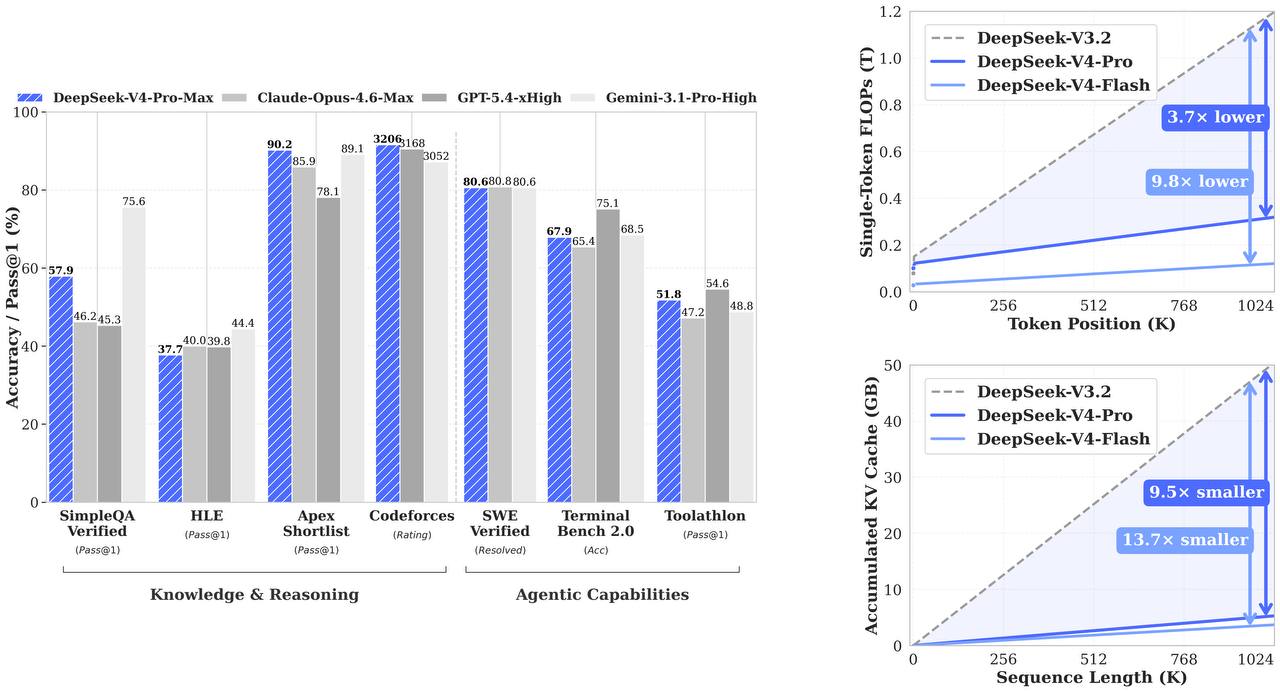

The lineup includes DeepSeek-V4-Flash and DeepSeek-V4-Pro. Both support a context window of up to 1M tokens and a maximum output of up to 384K tokens, plus tool calls and JSON output. In other words, this isn’t just a smarter chat model, but one designed from the start for serious product and agent scenarios.
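To make "tool calls and JSON output" concrete, here is a minimal sketch of what such requests might look like. It assumes the models accept the standard OpenAI chat-completions request shape (`tools`, `response_format`); the `get_weather` tool, the prompts, and both helper functions are hypothetical and exist only for illustration. Nothing is sent over the network.

```python
import json

MODEL = "deepseek-v4-flash"  # model name as quoted in the announcement


def build_tool_call_payload(question: str) -> dict:
    """Build a chat request that offers the model one callable tool."""
    return {
        "model": MODEL,
        "messages": [{"role": "user", "content": question}],
        "tools": [
            {
                "type": "function",
                "function": {
                    "name": "get_weather",  # hypothetical tool, not a real API
                    "description": "Return the current weather for a city.",
                    "parameters": {
                        "type": "object",
                        "properties": {"city": {"type": "string"}},
                        "required": ["city"],
                    },
                },
            }
        ],
    }


def build_json_output_payload(text: str) -> dict:
    """Build a chat request that asks for a JSON object as the reply."""
    return {
        "model": MODEL,
        "messages": [
            {
                "role": "system",
                "content": "Reply with a JSON object with keys 'title' and 'tags'.",
            },
            {"role": "user", "content": text},
        ],
        # OpenAI-style JSON mode; assumed to be honored by the V4 models.
        "response_format": {"type": "json_object"},
    }


if __name__ == "__main__":
    payload = build_tool_call_payload("What is the weather in Hangzhou?")
    print(json.dumps(payload, indent=2))
```

In an agent loop, the first payload would let the model answer with a tool call instead of text, while the second is the sort of request you would use when downstream code needs to parse the reply.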

Thinking mode is enabled by default; it can be switched off (non-thinking mode) when speed matters.

The result is a production-ready product. The API is compatible with both the OpenAI and Anthropic formats, and the old model names deepseek-chat and deepseek-reasoner are gradually being mapped to modes of deepseek-v4-flash.
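Since the API follows the OpenAI format, a plain HTTP request should be enough to talk to it. The sketch below uses only the Python standard library. The base URL `https://api.deepseek.com`, the `/chat/completions` path, and the `DEEPSEEK_API_KEY` environment variable are assumptions not stated in the announcement, and `build_chat_request` is a hypothetical helper.

```python
import json
import os
import urllib.request

BASE_URL = "https://api.deepseek.com"  # assumed base URL, not given in the post


def build_chat_request(prompt: str, api_key: str,
                       model: str = "deepseek-v4-flash") -> urllib.request.Request:
    """Prepare (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


if __name__ == "__main__":
    key = os.environ.get("DEEPSEEK_API_KEY")  # assumed variable name
    if key:
        request = build_chat_request("Say hello.", key)
        with urllib.request.urlopen(request, timeout=60) as resp:
            reply = json.load(resp)
        # OpenAI-format responses carry the text under choices[0].message.content
        print(reply["choices"][0]["message"]["content"])
    else:
        print("Set DEEPSEEK_API_KEY to actually send the request.")
```

Existing code written against an OpenAI-compatible client would, under the same assumptions, mostly need a different base URL and model name.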

Pricing: $0.14/$0.28 per 1M tokens for Flash and $1.74/$3.48 for Pro.
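For a feel of what these prices mean per request, here is a rough calculator. It assumes each price pair reads as input/output (the post does not say so explicitly) and ignores any caching discounts; `estimate_cost` and the `flash`/`pro` keys are illustrative names.

```python
# USD per 1M tokens as quoted in the post.
# Reading each pair as (input, output) is an assumption.
PRICES = {
    "flash": (0.14, 0.28),
    "pro": (1.74, 3.48),
}


def estimate_cost(tier: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD of a single request."""
    input_price, output_price = PRICES[tier]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


if __name__ == "__main__":
    for tier in PRICES:
        cost = estimate_cost(tier, input_tokens=200_000, output_tokens=10_000)
        print(f"{tier}: ${cost:.4f}")
```

Under that reading, a request with 200,000 input tokens and 10,000 output tokens comes to roughly $0.03 on Flash and roughly $0.38 on Pro.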

DeepSeek says you can already try it in the web version.